When DeepSeek R1 landed in January 2025, the reaction in AI circles was not enthusiasm. It was something closer to discomfort. The model performed at a level that placed it in direct competition with OpenAI’s o1 and Anthropic’s best reasoning models — at a reported training cost that was, depending on which figures you accepted, between five and fifty times lower than what American labs had spent on comparable systems. The discomfort was not about a Chinese competitor building a good AI model. It was about what that model’s existence implied about the assumptions the entire industry had been operating on.

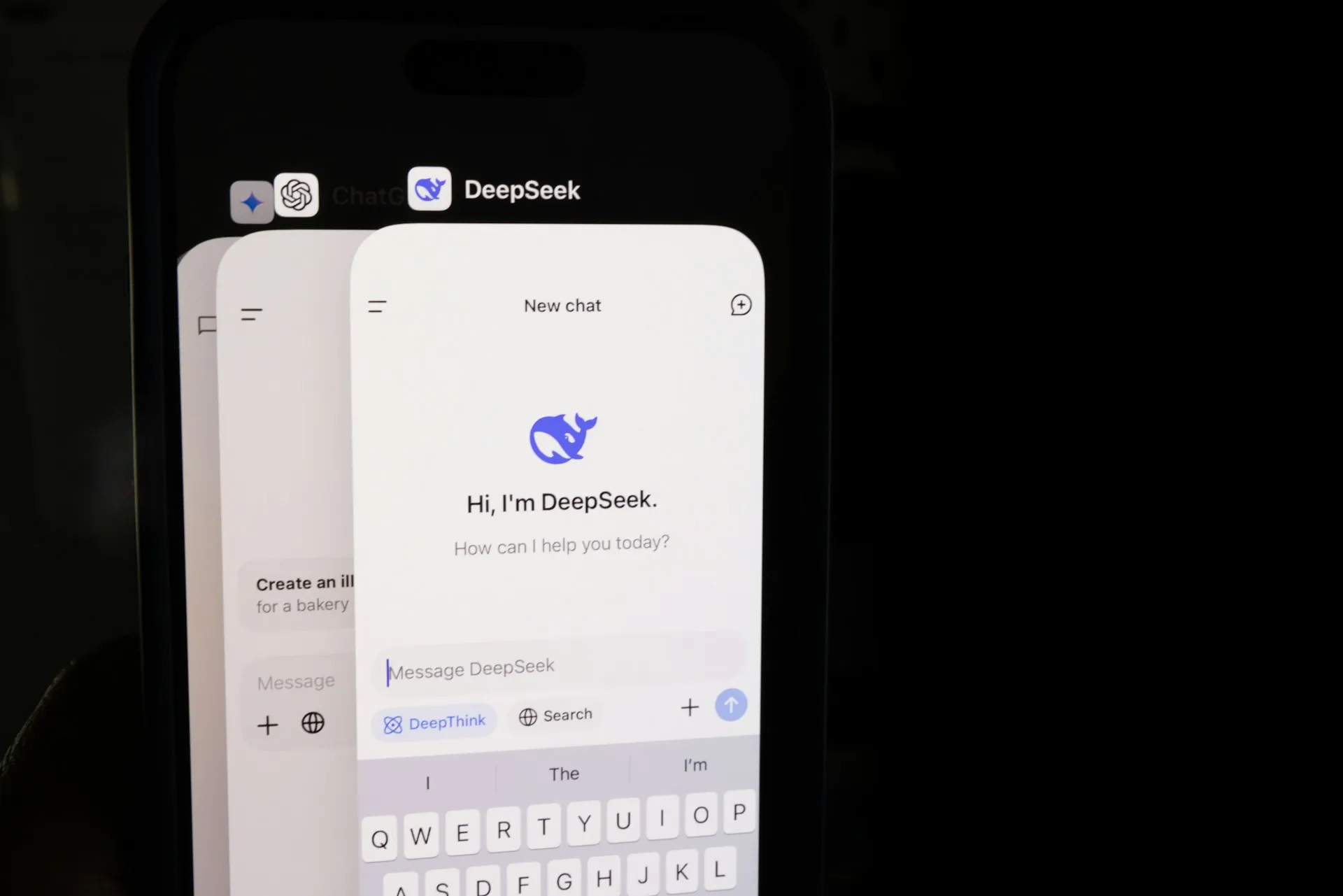

What DeepSeek is, before the hype

DeepSeek is an AI research lab founded in 2023 and backed by the Chinese quantitative hedge fund High-Flyer. Unlike the best-known Western labs, which grew up close to big tech capital and infrastructure (OpenAI through its partnership with Microsoft, Anthropic through its ex-OpenAI founders, Google DeepMind inside Alphabet), DeepSeek started with deep quantitative finance expertise and a stated commitment to fundamental research rather than product-led development. This origin matters because it shaped the lab’s approach: research rigor over product velocity, architectural innovation over compute scaling.

The models that made DeepSeek’s name are the V series (V2, V2.5, V3) and the R series. The V models are general-purpose language models optimized for performance-per-cost. The R models are reasoning-specialized: they are designed to think through problems step by step before responding, comparable in approach to OpenAI’s o series, whose architecture has not been published. It is the R1 model that generated the January 2025 shockwave, and understanding why requires understanding what it cost to build.

The cost disruption: what the numbers actually mean

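The figure that made the headlines was a reported training cost of approximately six million dollars, set against estimates of one hundred million or more for comparable American frontier models. The number is usually attached to R1, but it originates in the technical report for DeepSeek-V3, the base model R1 is built on, and it covers only that model’s final training run, not prior research, ablation experiments, or R1’s additional reinforcement-learning stage. The figure requires context to interpret correctly, and the AI industry has been arguing about that context since the day it was published.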

The lower training cost does not mean DeepSeek found a magical efficiency that other labs missed. It reflects a combination of factors: architectural choices that reduce compute requirements for a given performance level, differences in what is being counted (pre-training versus fine-tuning versus full training run costs), and access to compute that may be structured differently than Western cloud pricing. The figure is real; its direct comparability to Western training cost estimates is contested.
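The headline number is a simple product, and writing it out shows how sensitive it is to what gets counted. The sketch below uses the two inputs stated in the DeepSeek-V3 technical report (2.788 million H800 GPU-hours at an assumed rental price of $2 per GPU-hour). The overhead multipliers are purely hypothetical placeholders for everything the report leaves out; they are not estimates of DeepSeek’s actual spending.

```python
# Inputs as stated in the DeepSeek-V3 technical report.
GPU_HOURS = 2_788_000          # H800 GPU-hours for the final training run
RENTAL_USD_PER_HOUR = 2.0      # rental price the report assumes


def training_cost_usd(gpu_hours: float, usd_per_hour: float, overhead: float = 1.0) -> float:
    """Cost = GPU-hours x hourly rate x overhead multiplier."""
    return gpu_hours * usd_per_hour * overhead


headline = training_cost_usd(GPU_HOURS, RENTAL_USD_PER_HOUR)
print(f"Final run only: ${headline:,.0f}")

# HYPOTHETICAL multipliers standing in for research, ablations, and failed runs.
for overhead in (1.0, 3.0, 5.0):
    cost = training_cost_usd(GPU_HOURS, RENTAL_USD_PER_HOUR, overhead)
    print(f"overhead x{overhead:.0f}: ${cost / 1e6:.1f}M")
```

The first line reproduces the reported figure; the loop only illustrates that a comparison against another lab’s number is meaningful when both sides apply the same accounting scope.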

What is not contested is the performance. On reasoning benchmarks covering mathematics, code generation, and logical problem solving, DeepSeek R1 performed at a level that placed it among the global top tier. Whether it was achieved at exactly six million dollars or at three times that cost, the gap with American models’ training economics remained striking enough to force a genuine reassessment of the cost assumptions underlying frontier AI development.

The architecture innovation: mixture of experts at scale

DeepSeek’s models are built on a Mixture of Experts (MoE) architecture executed with an efficiency that distinguishes them from earlier implementations. The core principle is to activate only a small subset of the model’s parameters for each token, chosen by a learned router, rather than the entire network. This substantially reduces the compute required per inference.

DeepSeek V3, for example, uses 671 billion total parameters but activates only 37 billion for any given token. The practical effect is frontier-class performance at inference compute closer to that of a much smaller model. For organizations running high-volume content operations (millions of API calls per month, as described in Generative AI news: the trends transforming content creation), this cost structure is not an academic point. It changes which AI-assisted content workflows are economically viable.
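The routing idea can be illustrated with a toy example. What follows is a minimal sketch of generic softmax top-k gating, not DeepSeek’s actual router, whose details differ; the experts, scores, and function names are hypothetical and chosen only to show that unselected experts are never evaluated.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts for one token and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}


def moe_layer(token, experts, router_scores, k):
    """Weighted sum of the selected experts only; the others are never called."""
    weights = route_top_k(router_scores, k)
    return sum(w * experts[i](token) for i, w in weights.items())


# Toy setup: four scalar "experts" and one token's router scores (all hypothetical).
experts = [lambda x, scale=scale: scale * x for scale in (1.0, 2.0, 3.0, 4.0)]
scores = [0.1, 2.0, -1.0, 1.5]

chosen = route_top_k(scores, k=2)
print(sorted(chosen))            # indices of the two active experts
output = moe_layer(10.0, experts, scores, k=2)

# Share of DeepSeek V3's parameters active per token, from the figures above.
active_fraction = 37 / 671
print(f"{active_fraction:.1%}")
```

With the parameter counts quoted above, about 5.5 percent of the network does work for each token. The full set of weights still has to be stored and served, so the saving is in compute per token rather than in memory footprint.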

The MoE architecture also informed the broader industry’s direction: multiple major labs accelerated their MoE development timelines after DeepSeek’s results demonstrated the approach’s scalability at the frontier.

The open-weight release: the decision that changed the game

DeepSeek’s decision to release R1 as an open-weight model — allowing anyone to download and run the model weights on their own infrastructure — is the decision with the longest-lasting consequences. It meant that any organization with sufficient compute could run a frontier-class reasoning model without paying per-query API fees, without routing data through a third-party provider, and without accepting any of the usage policy constraints that commercial API providers impose.

For enterprises with data sovereignty requirements — healthcare organizations, financial institutions, government contractors — this was immediately significant. The usual trade-off between capability and deployment control had been, for reasoning-class models, sharply unfavorable: the best reasoning was only available through APIs that required sending data to external servers. DeepSeek R1’s open weights collapsed this trade-off. High-capability reasoning, on-premises, under full organizational control, became available.

The sovereignty dimension connects directly to the content production context. News organizations, legal publishers, and financial content operations that handle sensitive or embargoed material before publication have specific reasons to prefer on-premises AI deployment. DeepSeek R1 made a frontier-capable option available to them that did not previously exist.
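On the cost side of that choice, the point about per-query API fees reduces to a break-even calculation. The sketch below assumes self-hosting can be approximated as a fixed monthly cost and API usage as a flat price per thousand calls; both inputs are hypothetical placeholders, not quotes from any provider, and real deployments would also need to account for staffing, utilization, and hardware depreciation.

```python
import math


def break_even_calls(monthly_selfhost_usd: float, api_usd_per_1k_calls: float) -> int:
    """Smallest monthly call volume at which self-hosting costs no more than the API."""
    if api_usd_per_1k_calls <= 0:
        raise ValueError("api_usd_per_1k_calls must be positive")
    return math.ceil(monthly_selfhost_usd / api_usd_per_1k_calls * 1000)


# HYPOTHETICAL inputs for illustration only.
MONTHLY_SELFHOST_USD = 20_000.0   # hardware lease, power, operations
API_USD_PER_1K_CALLS = 4.0        # blended API price per thousand calls

threshold = break_even_calls(MONTHLY_SELFHOST_USD, API_USD_PER_1K_CALLS)
print(f"Self-hosting breaks even at {threshold:,} calls per month")
```

For organizations whose constraint is data sovereignty rather than budget, this number is secondary: the deployment decision is made on where the data may travel, and the break-even only indicates what that decision costs.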

The geopolitical dimension: what it means and what it does not

DeepSeek is a Chinese company. Its models are developed and trained in China. This creates a set of legitimate considerations that are distinct from the model’s technical capabilities.

The data handling question is real: organizations routing queries to DeepSeek’s commercial API are sending data to infrastructure governed by Chinese law, which includes provisions for government data access that differ materially from US or EU legal frameworks. For content operations handling sensitive material, this is a genuine compliance concern, not a reflexive geopolitical reaction.

The open-weight release partially addresses this concern: running DeepSeek models on your own infrastructure means your data never leaves your environment. But it does not address all concerns. The training data and the fine-tuning choices embedded in the model weights remain opaque, whereas Western open-weight models, which operate under greater regulatory and press scrutiny, tend to be somewhat more transparent on both points.

The honest assessment is that DeepSeek’s models are technically impressive and competitive on cost, and that enterprise deployment requires a genuine compliance evaluation: not an automatic disqualification, but a documented decision with organizational governance sign-off.
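One way to make such a decision documented rather than reflexive is to record it as data. The sketch below is a hypothetical structure, not an established framework: the review names and the rules are assumptions derived from the two concerns described above (data jurisdiction when using the vendor API, and opaque training data in either deployment mode).

```python
from dataclasses import dataclass, field
from enum import Enum


class Deployment(Enum):
    VENDOR_API = "vendor_api"      # queries leave the organization
    SELF_HOSTED = "self_hosted"    # open weights on own infrastructure


@dataclass
class ModelEvaluation:
    model: str
    deployment: Deployment
    handles_sensitive_data: bool
    sign_offs: set[str] = field(default_factory=set)


def required_reviews(ev: ModelEvaluation) -> set[str]:
    """Reviews that must be signed off before deployment (hypothetical rules)."""
    reviews = {"model_provenance"}           # opaque training data applies in both modes
    if ev.deployment is Deployment.VENDOR_API:
        reviews.add("data_jurisdiction")     # data is governed by the vendor's legal regime
        if ev.handles_sensitive_data:
            reviews.add("compliance")
    return reviews


def is_approved(ev: ModelEvaluation) -> bool:
    """Approved only when every required review has a recorded sign-off."""
    return required_reviews(ev) <= ev.sign_offs


api_case = ModelEvaluation("deepseek-r1", Deployment.VENDOR_API, handles_sensitive_data=True)
local_case = ModelEvaluation(
    "deepseek-r1", Deployment.SELF_HOSTED, handles_sensitive_data=True,
    sign_offs={"model_provenance"},
)
print(sorted(required_reviews(api_case)))
print(is_approved(api_case), is_approved(local_case))
```

The design choice worth noting is that country of origin never appears as a field. The record captures where data flows and what is known about the model, which are the questions the governance team can actually answer and defend.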

DeepSeek for content AI: where it actually fits

For content practitioners evaluating DeepSeek’s models practically, the strongest use cases align with the R1 model’s reasoning strengths: research synthesis, structured analysis, complex editorial planning, and code-assisted content operations. For pure content generation — blog posts, marketing copy, social media — the performance difference between DeepSeek and frontier American models is small enough that cost and sovereignty factors dominate the decision.

The multilingual dimension, examined in more detail in Qwen3 ASR flash: why this AI model is getting attention for the audio domain, is similarly relevant here: DeepSeek’s performance on Chinese-language content tasks is strong in ways that Western models cannot match at comparable cost levels.

For content teams building AI pipelines on open infrastructure — the kind of edge-deployed, sovereignty-aware architecture described in LLM news: the new models changing AI right now — DeepSeek R1 is a serious option that should appear in any honest evaluation. Excluding it because of its origin without engaging with the specific governance questions it raises is not a risk management decision. It is a reflex dressed as a policy.

DeepSeek’s significance is not that it proved a Chinese lab could build a competitive AI model. That was already understood. Its significance is that it demonstrated the cost assumptions underlying frontier AI development were contingent rather than necessary — that the expensive way was not the only way. That demonstration changed the industry’s internal conversation about what is possible at what cost, and those conversations are still producing consequences.

For broader context on the model landscape DeepSeek has disrupted, see LLM news: the new models changing AI right now and Generative AI news: the trends transforming content creation. For another emerging model worth similar analytical attention, read Sensunova AI: a new model you should watch closely.

The question DeepSeek forces onto every AI procurement and governance team: Your organization has a policy for evaluating AI models. Does it ask the right questions about capability, cost, and governance — or does it stop at country of origin, and if so, can you explain why that is sufficient?